CS194-26 Project 1: Images of the Russian Empire

Brief Project Overview

Sergei Mikhailovich Prokudin-Gorskii was a Russian chemist and photographer who gained special permission from the Tsar to travel around Russia photographing the Empire in color. However, this was in 1907, before color photography existed. Sergei was able to capture color on black and white film by taking three exposures of the same scene in rapid succession, one each through a red, a green, and a blue filter. He envisioned that special three-color projectors would one day allow the original scene to be reconstructed from the three black and white slides.

The Library of Congress hosts the Prokudin-Gorskii collection online, and has gone to painstaking lengths to recreate the original images as faithfully as possible. The goal of this project is to develop a script that quickly produces color images with very few visual artefacts.

Approach

We begin by implementing a utility function used to align images. The function takes a reference image and an image to align, and compares them for similarity. It scans every possible displacement within a given window (I used -15px to 15px to start). I used the Sum of Squared Differences (SSD) metric to determine how closely aligned the images were: the smaller the value, the better the alignment.
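
The exhaustive search described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the names `ssd` and `align_exhaustive` are hypothetical, channels are assumed to be 2D NumPy arrays of equal shape, and `np.roll` is used for shifting, which wraps pixels around the edges (an assumption made here for simplicity).

```python
import numpy as np

def ssd(a, b):
    """Sum of squared differences between two equally sized arrays."""
    diff = np.asarray(a, dtype=np.float64) - np.asarray(b, dtype=np.float64)
    return float(np.sum(diff ** 2))

def align_exhaustive(reference, image, window=15):
    """Return the (dy, dx) shift of `image` that minimizes SSD against `reference`.

    Every displacement in [-window, window] on both axes is tried.
    Shifting uses np.roll, so pixels wrap around the image edges.
    """
    best_score, best_shift = float("inf"), (0, 0)
    for dy in range(-window, window + 1):
        for dx in range(-window, window + 1):
            shifted = np.roll(image, (dy, dx), axis=(0, 1))
            score = ssd(reference, shifted)
            if score < best_score:
                best_score, best_shift = score, (dy, dx)
    return best_shift
```

Calling `align_exhaustive(blue, green)` would return the shift to apply to `green` (for example with `np.roll`) before stacking it with `blue`. Because the plate borders differ between channels, scoring only an interior region of each image may give more reliable results.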

Next, we implement an image pyramid, a recursive multi-resolution search structure. At each level, we halve the size of the image and double the size of our search window. We search the smallest image first to solve for an initial displacement. Then we move up a level and apply that displacement before repeating the process.
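
A coarse-to-fine search along these lines might look like the sketch below. It is an illustration under stated assumptions rather than the implementation used for the results: the function names, the `min_size` cutoff, the 2x2 averaging used for downsampling, and the search radii are all hypothetical choices. In particular, this sketch refines with a small fixed radius at each finer level, which is not necessarily the window schedule described above.

```python
import numpy as np

def best_shift(reference, image, center=(0, 0), radius=4):
    """Exhaustive SSD search over shifts within `radius` of `center`."""
    best_score, best = float("inf"), center
    for dy in range(center[0] - radius, center[0] + radius + 1):
        for dx in range(center[1] - radius, center[1] + radius + 1):
            diff = reference - np.roll(image, (dy, dx), axis=(0, 1))
            score = float(np.sum(diff ** 2))
            if score < best_score:
                best_score, best = score, (dy, dx)
    return best

def downsample(image):
    """Halve each dimension by averaging 2x2 blocks (odd edges are trimmed)."""
    h, w = image.shape[0] // 2 * 2, image.shape[1] // 2 * 2
    image = image[:h, :w]
    return (image[0::2, 0::2] + image[1::2, 0::2]
            + image[0::2, 1::2] + image[1::2, 1::2]) / 4.0

def align_pyramid(reference, image, min_size=32, radius=4, refine=2):
    """Coarse-to-fine alignment; returns (dy, dx) at full resolution."""
    reference = np.asarray(reference, dtype=np.float64)
    image = np.asarray(image, dtype=np.float64)
    # Base case: the image is small enough to search exhaustively.
    if min(reference.shape) <= min_size:
        return best_shift(reference, image, radius=radius)
    # Solve the half-resolution problem first.
    coarse = align_pyramid(downsample(reference), downsample(image),
                           min_size, radius, refine)
    # One pixel at the coarse level is two pixels here, so double the
    # estimate and search only a small neighborhood around it.
    center = (2 * coarse[0], 2 * coarse[1])
    return best_shift(reference, image, center=center, radius=refine)
```

The benefit is that the expensive wide search only happens on the smallest image, while each larger level checks just a handful of candidate shifts around the scaled-up estimate.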

Initial Results

The initial results were very good on most of the images in the dataset. I was amazed at how crisp and clear the aligned images became. My alignment of the self-portrait image is shown below.

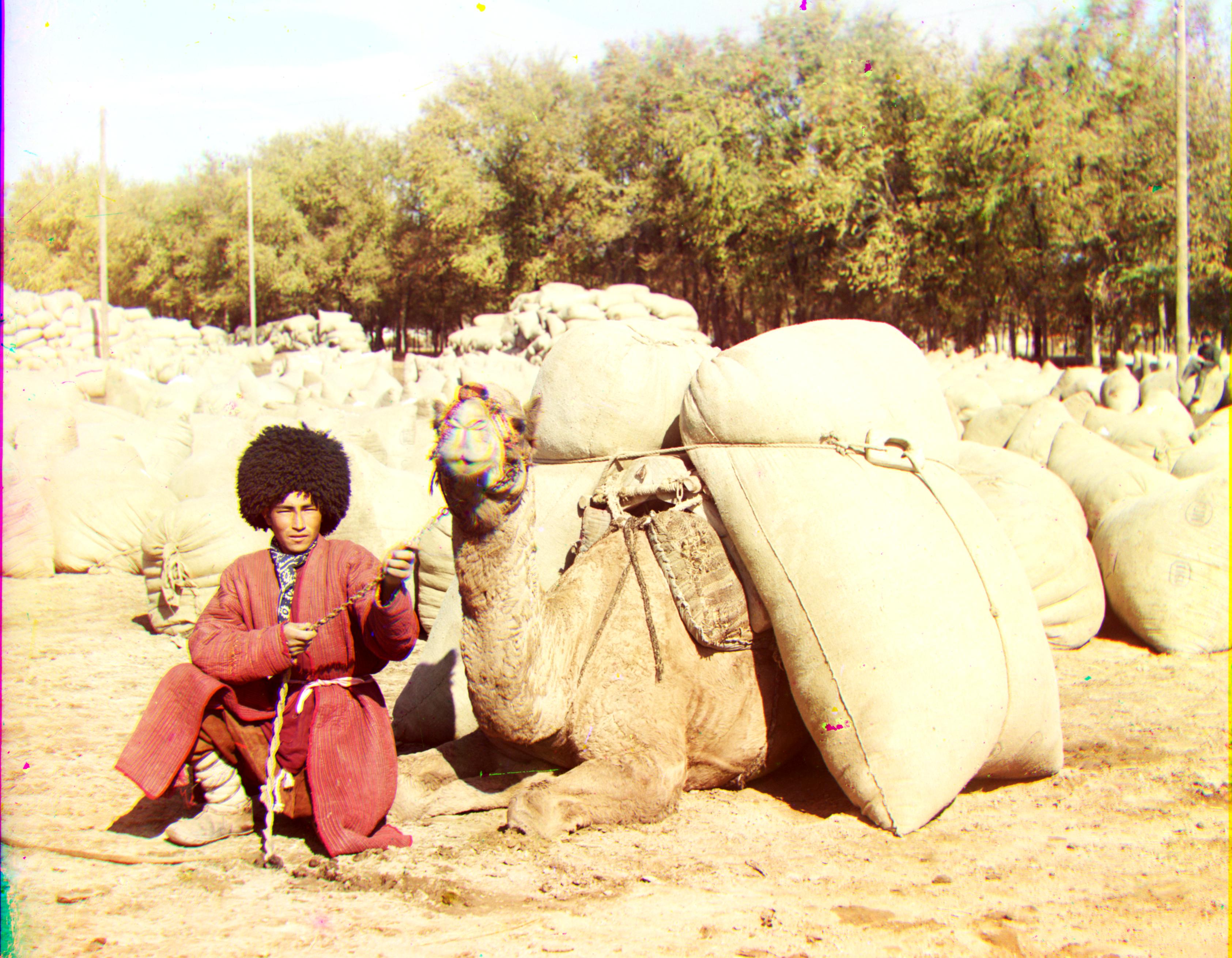

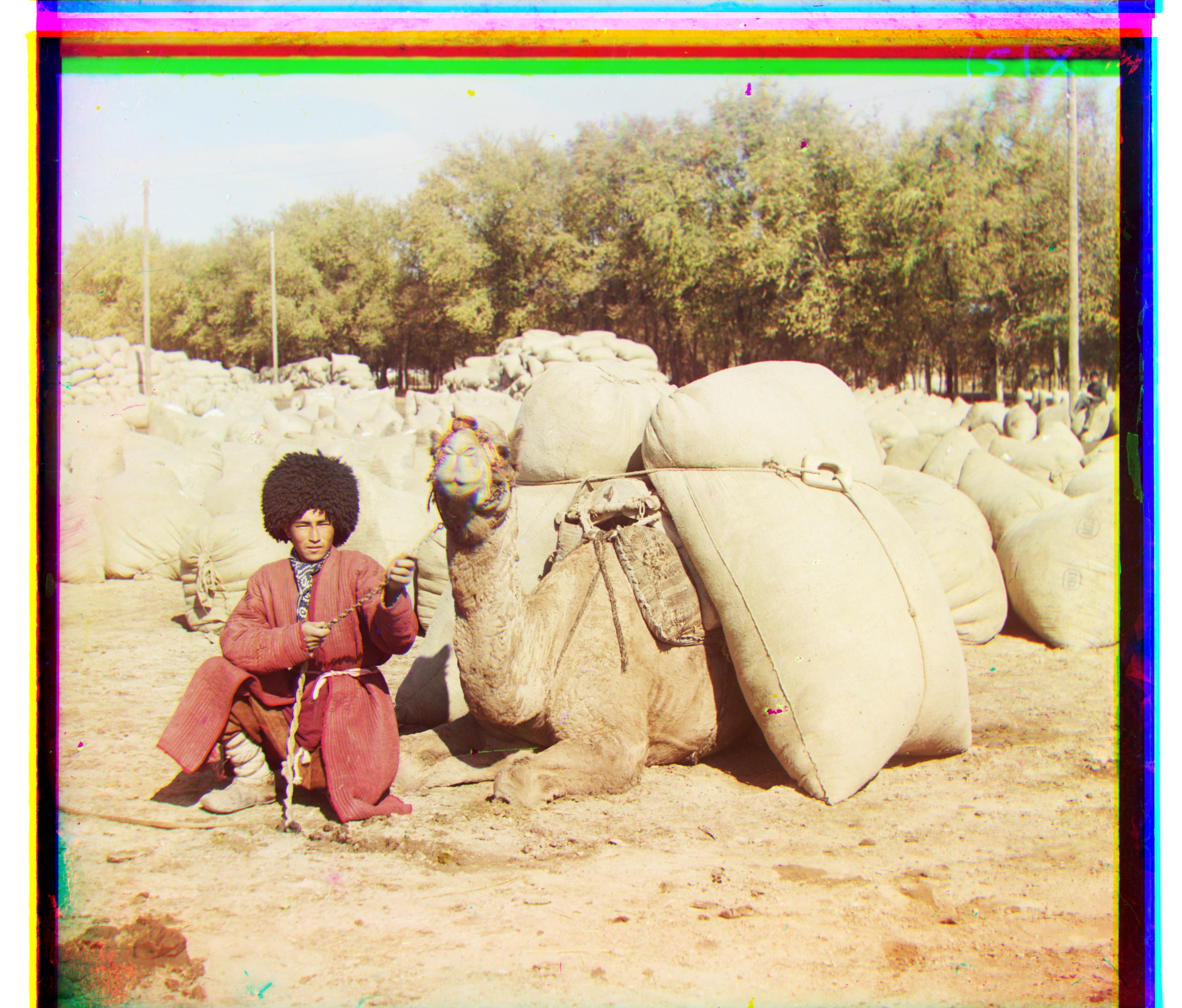

Upon closer inspection, many of the images have very small aberrations as a result of movement. Since the three exposures were not taken at exactly the same time, colorful fringes appear wherever an element in the scene changed position between them. My favorite example of this is the camel's face in the turkman image. The man held still, so his face remained very sharp, but you can see that the camel moved its face slightly, leading to an aurora of red around it. You can also see the yellow silhouette of a woman mid-turn in the harvesters photo. I'm unsure what steps, if any, could be used to correct this.

Bells and Whistles 1 - Automatic Cropping

The plates we used to generate these images aren't perfect. Each color channel image has its own borders, which very rarely line up with the other channels'. I attempted to remove the solid white and black borders from each of the r, g, and b layers before combining them. My algorithm was as follows:

For each channel in (r, g, b):

For each side in (top, bottom, left, right):

Average the values of a 1xN strip of pixels along that edge

If the average is > .9 or < .1, it is part of a white or black border, and can be cropped
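
The steps above can be sketched for a single channel as follows. This is a hedged illustration, not the exact code used: the function name `crop_borders` is hypothetical, pixel values are assumed to be floats in [0, 1], and the sketch assumes the check is repeated one row or column at a time until the edge no longer looks like a solid border.

```python
import numpy as np

def crop_borders(channel, low=0.1, high=0.9):
    """Trim rows/columns from each side while their mean looks like a solid border."""
    def is_border(strip):
        mean = strip.mean()
        return mean < low or mean > high

    top, bottom = 0, channel.shape[0]
    left, right = 0, channel.shape[1]
    # Each loop peels one 1xN strip off a side for as long as that strip
    # averages near black (< low) or near white (> high).
    while bottom - top > 1 and is_border(channel[top, left:right]):
        top += 1
    while bottom - top > 1 and is_border(channel[bottom - 1, left:right]):
        bottom -= 1
    while right - left > 1 and is_border(channel[top:bottom, left]):
        left += 1
    while right - left > 1 and is_border(channel[top:bottom, right - 1]):
        right -= 1
    return channel[top:bottom, left:right]
```

Since each channel is cropped independently, the three results can end up with different sizes, so they would need to be brought back to a common size before being stacked into a color image.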

Bells and Whistles 2 - Automatic Contrasting

Automatic contrasting can make certain features in an image more distinguishable to the viewer, improving image quality. Too much contrast, however, produces an oddly colored image. In order to update the contrast of each image, I implemented the algorithm found here. After experimenting with various parameters, I decided that boosting contrast by +25 produced a decent result on all images in the input set. Below you can see the improvement on the gates image.
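
For illustration, one widely circulated contrast formula for 8-bit images takes an adjustment value between -255 and 255 and scales pixel values away from mid-gray. The sketch below uses that formula as an assumption; it may or may not be the algorithm referenced above, and the function name `adjust_contrast` is hypothetical.

```python
import numpy as np

def adjust_contrast(image, amount=25):
    """Scale 8-bit pixel values away from mid-gray (128).

    `amount` is assumed to lie in [-255, 255]; 0 leaves the image unchanged.
    """
    factor = (259.0 * (amount + 255.0)) / (255.0 * (259.0 - amount))
    stretched = factor * (image.astype(np.float64) - 128.0) + 128.0
    # Clamp to the valid 8-bit range before converting back.
    return np.clip(stretched, 0, 255).astype(np.uint8)
```

With a positive `amount`, values above 128 get brighter and values below 128 get darker, which is why pushing the setting too far clips highlights and shadows and distorts colors.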

Complete Gallery - all effects applied